Author | Li Han, Zhu Yue

Editor | Zhao Jian

(ChinaIT.com News) Few could have imagined that, in this wave of AIGC, some AI companies would be disrupted themselves before they had time to disrupt their industries.

On July 12, Dave Rogenmoser, co-founder of the American AIGC unicorn Jasper, announced on the professional networking site LinkedIn: “In view of the huge changes in the industry, and in order to focus and realign resources, the team will start layoffs.” Jasper’s head of product, Jeremy Crane, also left earlier this month after less than a year in the job.

Founded in 2021, this unicorn completed a US$125 million funding round only last October and is one of the fastest-growing companies in the AIGC field.

Also cutting staff is Mutiny, a no-code AI marketing platform backed by Sequoia Capital and Tiger Global. According to posts by former employees on LinkedIn, Mutiny laid off about 30% of its workforce at the end of last month.

The AIGC battle for talent seems like only yesterday. Why are these two companies, backed by major investors and growing fast, laying off employees now?

The answer has to do with ChatGPT. On November 30, 2022, OpenAI released ChatGPT, and within just two months of launch its monthly active users exceeded 100 million. Startups building applications on top of large language models (LLMs) were hit hard.

Zhu Xiaohu, managing partner of GSR Ventures, once said: “ChatGPT is too powerful and unfriendly to startups.”

Historically, startups have often been the ones to seize the opportunities of major technological change. In the current wave of generative AI, building large models requires enormous investment, so entrepreneurs have more opportunities at the application layer. But when large-model companies start launching their own applications, how should application-layer startups manage their relationship with them, and how should they build their own moats?

In a recent discussion at the well-known startup incubator Y Combinator, the soul-searching question was put bluntly: “Will OpenAI kill all startups?”

The answer not only determines the fate of small and medium-sized startups but also bears on building a larger and more important AI ecosystem.

1. “Whales and Remoras” in the Open Ocean of AI

Before ChatGPT, Jasper was undoubtedly the most watched star unicorn in the AIGC field.

In 2020, OpenAI released GPT-3, the predecessor of the large model underlying ChatGPT, which caused quite a stir in the field of artificial intelligence. Jasper founder Dave Rogenmoser quickly recognized the business opportunity in GPT-3. Through his connection with Y Combinator, which had invested in his previous startup, Jasper obtained access to GPT-3 in December 2020.

In its early days, GPT-3 was hard to learn and inconvenient to call, and it could not converse directly with users. Jasper built carefully engineered prompts and an interactive interface on top of GPT-3, then drew on the team’s marketing experience to fine-tune the model. Jasper offers AI text generation along with customized templates that let marketers automatically produce blog posts, press releases, advertising copy and other content, and it became very popular among media workers and marketers.

Jasper was an instant success when it launched in 2021. In October of that year, it received $85 million in Series A financing. Its 2022 annual revenue was expected to reach US$60 million, and it reached a valuation of US$1.5 billion only 18 months after its founding.

“They’ve got a huge opportunity,” said Thomas Laffont, co-founder and senior managing director of Coatue. “Jasper is in a leading position and there’s a clear product-market fit. They’ve also nailed a great set of investors with a variety of expertise.”

However, the birth of ChatGPT has changed everything.

GPT-3 was the backbone of Jasper’s business. In the early days of GPT development, Jasper and OpenAI were good partners: one side provided the technology, the other gave feedback, and each got what it needed. But the core technology was in the other party’s hands, and Jasper was, after all, built on top of GPT. For a company with no large model of its own, however brilliant its achievements, this arrangement was bound to be risky.

“While Altman has become a whale in the open ocean of AI, Rogenmoser is more like a remora, a finned fish that attaches itself to whales and feeds on their leftovers. OpenAI needs partners like Jasper to pay the bills, but not nearly as much as Jasper relies on OpenAI,” commented Arielle Pardes, a writer at The Information.

The launch of ChatGPT fully exposed this risk. After two years of iteration, and with the support of RLHF (reinforcement learning from human feedback), ChatGPT can not only understand user instructions but also converse with users directly in natural language.

Simply put, a “middleman” between the large language model and the user is no longer needed.

At the same time, ChatGPT’s free release put Jasper in an even deeper dilemma: who would pay $49 a month for Jasper when they can use ChatGPT for free?

Jasper is not the only company affected by ChatGPT. All companies with text-related products face similar risks.

Take Grammarly, an AI writing assistant company valued at over US$10 billion. Soon after ChatGPT’s release, many users realized that its powerful text generation could overshadow the core strength Grammarly prides itself on: excellent spelling and grammar checking. Given the large price gap, Grammarly no longer looked like a cost-effective choice.

“I have been using Grammarly to help me revise my English for so many years, but ChatGPT is really powerful, much better than Grammarly. ChatGPT knows what I am talking about, but Grammarly doesn’t,” said one international student.

Luis Ceze, CEO of OctoML, also stated that “if companies like Grammarly don’t find their own unique competitive model soon, they will soon be replaced by other text interfaces that integrate LLM”.

To cope with the impact of generative AI, Grammarly launched GrammarlyGO, a tool built on large language models, in April this year, offering features such as quickly generating drafts, adjusting text length, and replying to emails.

Grammarly CEO Rahul Roy-Chowdhury also said that “Grammarly is moving beyond the traditional business of revising and correcting text and into writing content”.

2. “Shell Products” Are Losing Their Market

There is no clear data on how many products are built on the GPT series or other large models, but some of the startups behind them, like Jasper, are in jeopardy.

Companies that call a large model directly usually have fairly simple business logic: the user enters information, the fine-tuned large model processes it, and the output is returned to the user, quickly closing the loop. In Jasper’s case, the user enters a request and the model produces an article as output.

When a large-model company iterates on its model or launches a similar product, it can easily match or even surpass the functions and value of these products, because in essence they are just “shell products” wrapped around large models.

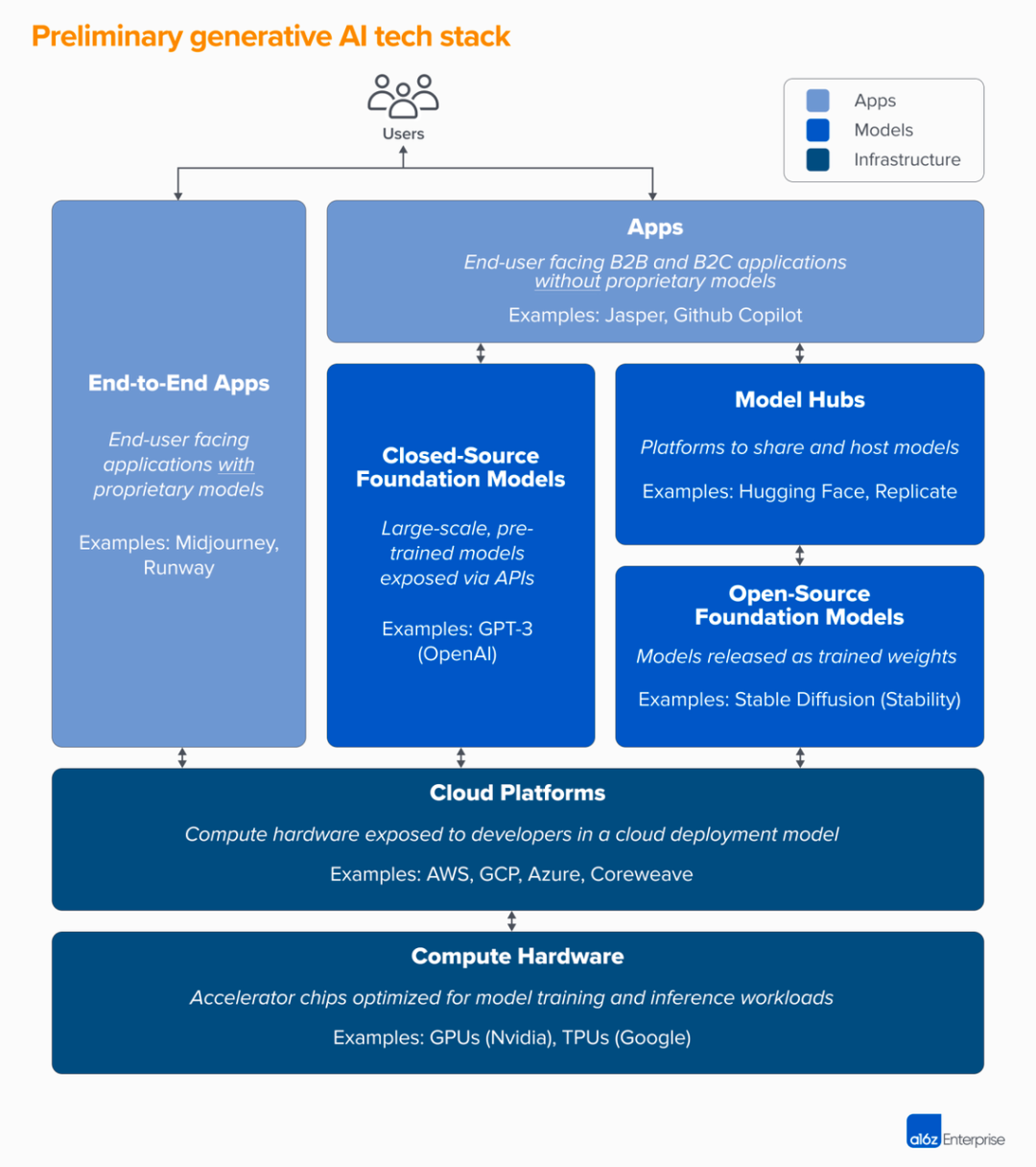

A16Z, a well-known venture capital institution in Silicon Valley, painted a picture of the current generative AI technology stack. Compared with applications with their own models, applications without models need to rely on the support of large-scale model companies. The competition between “Jaspers” and OpenAI is essentially a competition between the application layer and the model layer.

Li Zhifei, the founder of GoMask, once said in the circle of friends, “The release of ChatGPT sucked many shallow users away from Jasper.”

Generative AI technology stack

Image credit: A16Z

In the early stages, relying on large-model suppliers is a good way for application-layer companies to get started and even grow their businesses. But once a business takes shape, managing the relationship between model and application becomes a major problem that generative AI application companies must face.

One view is that model as a service (MaaS) is enough: it lets a small development team iterate quickly and switch model suppliers promptly as the technology advances. Jasper has indeed done this, incorporating open-source models such as GPT-J, GPT-NeoX, T5 and BLOOM into its products alongside OpenAI’s models.

Another view is that companies should train on their proprietary product data and develop a large model of their own. Li Zhifei also said: “For professional users, one possible path for Jasper was to build its own large model and develop unique product features for professional users, so as to retain them.”

At the same time, however, he does not consider this a promising path. Developing a large model in-house demands far more funding and talent, and startups rarely have the financial, material and human resources of technology giants. Moreover, in the United States, a handful of leading large-model companies, with OpenAI at the forefront, have gradually taken a dominant position, and some open-source large models have already largely built out their ecosystems.

This is why there are so many startups in AIGC applications, while large models remain a game for the giants.

More and more developers share the same concern: if they keep building applications on OpenAI’s API, will OpenAI eventually release products that compete with them?

Besides the chatbot ChatGPT, OpenAI has released three other products: the text-to-image tool DALL-E, the natural-language-to-code system Codex, and the automatic speech recognition system Whisper.

DALL-E, released in January 2021, generates images from natural-language text descriptions. A year later, the more capable second-generation DALL-E 2 was unveiled, and it is now open to everyone.

Codex powers the AI code-completion tool GitHub Copilot and can convert simple English instructions into more than a dozen popular programming languages. It was released through OpenAI’s API in August 2021.

Whisper is an automatic speech recognition system that can recognize and transcribe 99 languages. OpenAI open-sourced Whisper in September 2022 and released a Whisper API together with the ChatGPT API in March 2023.

For now, however, OpenAI appears to have paused launching further products.

In May this year, at a closed-door discussion organized by the CEO of Humanloop, OpenAI co-founder and CEO Sam Altman said that OpenAI would not release any more products beyond ChatGPT.

Altman noted that the great platform companies in history have each had a killer app. ChatGPT’s vision is to become a super-intelligent assistant for work, and OpenAI will stay out of many other GPT use cases.

In a Y Combinator discussion titled “Will OpenAI Kill All Startups?”, Y Combinator Managing Director Michael Seibel argued that “companies like OpenAI and Anthropic are actually trying to build AGI, not trying to build AI-driven CRM (customer relationship management) or better search or something.”

The impact of OpenAI and artificial intelligence on startups remains to be seen, but history shows that in periods of major technological change, innovation usually creates greater opportunities for startups rather than suppressing them.

In the browser era, the release of Netscape did not stop the rise of Microsoft’s IE and Google; in the mobile Internet era, the arrival of the iPhone ushered in the rise of Meta, Uber and other mobile application makers.

So far, ChatGPT has hit only the “shell products” built on top of GPT. The AI revolution set off by OpenAI will give rise to remarkable new startups.

3. Where Are AI Applications Headed?

By incomplete count, within seven months of ChatGPT’s rise the number of large models worldwide had reached the hundreds, with at least 80 in China alone. Foundation models have now settled into a “war of a hundred models” among Internet giants, AI technology companies, star startups, academic research institutions and others.

Large amounts of capital, talent and technology have poured into foundation models, while discussion of the application layer is much quieter. Yet large models are infrastructure: only through applications do they build a relationship with users, and that forms a larger ecosystem.

Robin Li, founder, chairman and CEO of Baidu, believes that generative AI will give rise to new products and new business models, bringing a wave of entrepreneurship and investment, and that the biggest opportunity in the large-model era lies in the application layer. He once said: “At the application layer, there will be new entrepreneurial opportunities ten times that of WeChat and Douyin.”

Changes at the AI application layer fall into two main categories. The first is upgrading existing products with large models, represented by technology giants such as Microsoft, Salesforce and Alibaba, as well as a large number of small and medium-sized software companies.

Microsoft has brought OpenAI’s large-model capabilities into its entire product line, including the New Bing search engine, Microsoft 365 Copilot and Windows Copilot. In China, Alibaba’s DingTalk has likewise been revamped using Alibaba’s Tongyi Qianwen model.

Traditional SaaS companies are rapidly adopting AI. In vertical industries, AI products are used mostly in sales, consulting, management and similar scenarios to help companies build competitive barriers. Take Salesforce, the world’s largest CRM vendor: following an AI + data + CRM strategy, it announced two AI products, EinsteinGPT and SlackGPT, in 2023.

The second category is AI-native applications built on large models, which have yet to take off.

Take agents, which have recently become popular. As intermediaries between people and LLMs, agents may challenge the distribution mechanisms of existing platforms: instead of relying on a platform to use software, users interact directly with an agent to obtain services. Yesterday’s SaaS may become AaaS (agent as a service). This new product logic will further lower the barrier to using technology and extend it into new scenarios.

Whether existing Internet products or emerging AI-native applications, all may go through a new reshuffle in the large-model era. Xiong Longfei, technical director of Kingsoft Office, told “Jiazi Guangnian”: “The key is to build your own technical barriers.”

Both past accumulation and forward-looking investment matter. Beyond the large-model modules themselves, for example, Kingsoft Office has 35 years of accumulated core document-processing technology, plus AI capabilities it has been researching and developing since 2017. To integrate large models, Kingsoft Office has also built foundational technologies such as a vectorization system, a prompt manager, various AI capabilities, and a large-model plug-in system.

The core barriers of application-layer companies lie not only in model capabilities, but also in product design, data management, and service networks.

Take Grammarly: some users told “Jiazi Guangnian”, “Grammarly is mainly used through keyboard input methods, browser plug-ins and various desktop and mobile apps. ChatGPT may be used for essay writing or polishing a composition for a top score, but Grammarly is more convenient for daily communication.”

As model capabilities advance, computing power may cease to be a differentiator at the application layer, and data becomes the long-term barrier. Dai Yusen, managing partner of ZhenFund, said in an earlier speech that “when the quality and quantity of data increase, the performance and effect of the model will improve, and user barriers will also increase.” Take WeChat: at first it had no barriers, but it later built a network effect through its unique relationships with a vast number of users, and that became its barrier.

Beyond the general scenarios that large models can cover, entrepreneurs who have accumulated deep and unique industry data in vertical fields, or who are pioneers in niche fields, may be more likely to succeed.

The new wave of AI is like a great tide washing over the sand: only those with genuine core strengths will remain standing.

(Cover image source: The movie “The Hobbit: The Battle of the Five Armies”)
