According to foreign media reports, the US Patent and Trademark Office recently granted Apple a patent related to its Titan project: a light-field head-up display that projects information onto the windshield for the driver.

(ChinaIT.com News) In the patent, Apple points out that vehicles such as cars are sometimes equipped with head-up displays, which project images onto the windshield so that the driver can view them while driving. A head-up display is usually used to show vehicle status information, such as the speedometer reading. It lets the driver see this information safely, without looking away from the road ahead.

To maximize the usefulness of a head-up display in a vehicle, it is best to enhance its ability to present information to the occupants. The head-up display can be a light field display, which produces a light field output that lets the viewer see three-dimensional content. An array of light field display units and corresponding lenses can be used to direct the light field output to the viewer. The lenses direct the overlapping light field outputs of the display units to the viewer, forming an enlarged, seamless light field viewing area on the vehicle window.

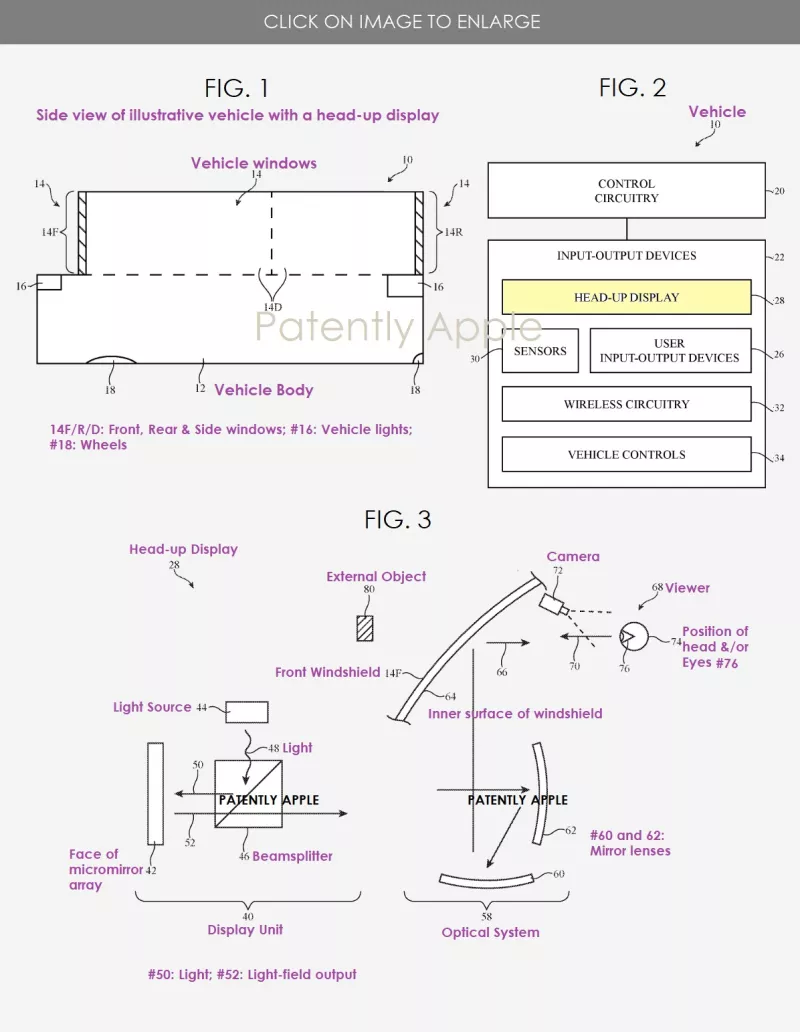

Image source | patentlyapple

In Apple’s patent, FIG. 1 is a side view of an illustrative vehicle with a head-up display; FIG. 2 is a schematic diagram of an illustrative vehicle or other system with a head-up display; and FIG. 3 is a side view of an illustrative head-up display in a vehicle.

01 Light field AR: the ideal “true AR HUD”

In recent years, progress in advanced driver assistance systems (ADAS) and augmented reality (AR) interaction technology has prompted some leading OEMs in China and abroad to consider developing AR HUD products that merge the virtual and the real, which has further driven innovation in HUD technology. The result is the third-generation HUD product: the AR HUD (Augmented Reality Head-Up Display).

However, an AR HUD that fuses the virtual and the real is far harder to build than a windshield HUD (WHUD). It requires not only multi-sensor fusion algorithms, but also spatial fusion algorithms, extremely tight control of system latency, and 3D light field display technology with real spatial depth and distance (similar to 3D holographic display).

The ideal light-field AR display technology can continuously project three-dimensional virtual information in front of the driver’s field of vision, matched to different real-world distances and focal distances, so that virtual information and the related real-world objects are displayed at the same spatial position. This achieves a perfect fusion of the virtual and the real: it delivers a genuine augmented reality display effect and also eliminates the dizziness caused by visual convergence conflict. In other words, only this “true AR HUD”, which perfectly fuses AR virtual imaging with the real road scene, can create more value for users without affecting driving safety.

Light field AR HUD effect demonstration

02 3D animation: “Fake AR HUD” in reality

The industry generally regards 2021 as the first year of the AR HUD. Mercedes-Benz (S-Class, including Maybach), Volkswagen, Audi, and many other OEMs in China and abroad have launched mass-produced models with AR HUD functions. However, because of suppliers’ technical limitations, they have basically abandoned the original “true AR HUD” concept of perfectly fusing virtual and real scenes.

The AR HUD products now on the market settle for second best in function and effect. They are built on ordinary WHUD hardware with 2D or 3D UI animations superimposed, projecting the image roughly 7 to 10 meters ahead of the driver’s line of sight (4.5 meters or more than 10 meters on individual models). Animations and UI icons for real-time navigation, ADAS, and other functions are played according to the vehicle’s location.

Specifically, the animation styles differ slightly: (1) Mercedes-Benz uses a slightly more complex 3D perspective effect for better real-time rendering, along with simple low-frequency optical image stabilization; (2) China’s domestic HUD industry has only just started. Constrained by technology, cost, and awareness, it quickly copied the hardware parameters of the international “fake AR HUD” but uses more primitive animations and no optical image stabilization.

The final interactive experience of this “fake AR HUD” is a set of UI animations floating in front of the driver’s line of sight, which interferes with driving to some extent and departs from the original purpose of HUD products.

03 Why is the light field AR display difficult to achieve?

Wikipedia’s definition of AR includes three elements: (1) accurate perception and presentation of key attributes of real and virtual objects, such as shape and location, which enables (2) a perfect combination of the real and virtual worlds and (3) real-time interaction.

A vehicle-mounted AR optical display system is more complicated than an AR head-mounted display. The eye box is tens of thousands of times larger, and the free-form windshield in the optical path seriously degrades display quality (aberration, dynamic and static distortion, and so on). The system must also accurately perceive and present objects up to several kilometers away and fuse them in real time.

(1) Spatial coordinate fusion: If the AR HUD projects at a fixed distance a few meters away, it cannot achieve spatial fusion. This greatly compromises the experience, and the gap between the optical distances of the virtual image and the real object is too large. If virtual and real objects are forcibly merged, the driver will soon experience visual convergence conflict, with symptoms such as dizziness and nausea that affect driving safety.

(2) Time coordinate fusion: Millisecond-level delay control is needed, which is one to two orders of magnitude stricter than the delay requirements of the sensor fusion and decision-making algorithms used in autonomous driving.
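The two fusion requirements above can be put in rough numbers. The following is a minimal sketch in Python, not taken from the patent or from any vendor. It assumes a 63 mm interpupillary distance and a simple symmetric viewing geometry; the function names are hypothetical, and the sample distances, speed, and latency are illustrative values only.

```python
import math

# Assumption: a typical adult interpupillary distance (not from the article).
ASSUMED_IPD_M = 0.063


def vergence_angle_deg(distance_m: float, ipd_m: float = ASSUMED_IPD_M) -> float:
    """Angle between the two eyes' lines of sight when fixating a point
    straight ahead at distance_m (simple symmetric geometry)."""
    if distance_m <= 0:
        raise ValueError("distance_m must be positive")
    return math.degrees(2.0 * math.atan((ipd_m / 2.0) / distance_m))


def vergence_mismatch_deg(virtual_image_m: float, real_object_m: float) -> float:
    """Difference in vergence angle between a fixed-distance virtual image
    and the real object it is supposed to annotate."""
    return abs(vergence_angle_deg(virtual_image_m) - vergence_angle_deg(real_object_m))


def overlay_drift_m(speed_kmh: float, latency_ms: float) -> float:
    """Distance the vehicle travels during the perception-to-display delay,
    a rough proxy for how far a world-locked overlay lags behind reality."""
    if speed_kmh < 0 or latency_ms < 0:
        raise ValueError("speed_kmh and latency_ms must be non-negative")
    return (speed_kmh / 3.6) * (latency_ms / 1000.0)


if __name__ == "__main__":
    # Illustrative values: virtual image at 7.5 m, real object at 50 m.
    print(f"vergence mismatch: {vergence_mismatch_deg(7.5, 50.0):.3f} deg")
    # Illustrative values: 100 km/h with 50 ms versus 5 ms end-to-end delay.
    for latency in (50.0, 5.0):
        print(f"drift at {latency:.0f} ms: {overlay_drift_m(100.0, latency):.3f} m")
```

The mismatch falls to zero as the virtual image distance approaches the real object's distance, which is the motivation for a variable-depth display. The drift grows linearly with both speed and latency, which is why the delay budget is stated in milliseconds.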

Therefore, in-vehicle AR requires a multi-sensor fusion algorithm to accurately perceive the real world, 3D light field display technology to accurately present a light-field-level virtual world, and a fusion algorithm for multiple spatial coordinate systems to achieve perfect spatial fusion. In addition, it must be combined with a “zero delay” fusion algorithm system to achieve perfect fusion of time coordinates and complete real-time interaction. Obviously, cutscene-level fake AR with no fusion at all cannot compare with this kind of accurate, real-time presentation and interaction.

04 FUTURUS exclusive light field AR HUD system

In fact, the concept disclosed in Apple’s patent coincides with the technology roadmap that Future (Beijing) Black Technology Co., Ltd. (FUTURUS) laid out many years ago.

As a new technology partner and supplier to several leading international car manufacturers, FUTURUS began research and development of a “true AR HUD” that fuses virtual and real scenes many years in advance. Adhering to in-house research and its own patent portfolio, it has developed a “true AR HUD” optical system based on a 3D light field.

FUTURUS light field AR HUD product demonstration

FUTURUS’s exclusive light field AR HUD system uses optical technology and a complete set of algorithms, rather than fake UI or cutscene simulation, to continuously vary the virtual image distance of the HUD picture from 4 meters to infinity, truly achieving an optical-level fusion of virtual content and the real road scene. It also addresses many industry problems, such as real-time multi-sensor fusion, fusion of multiple spatial coordinate systems, and “zero delay” time coordinate fusion, and achieves a zero-delay response from perception to display, so that virtual driving assistance information and the real physical environment can be fused in real time. To control costs while improving reliability and user experience, the sensing and computing hardware and software that handle all this complex data collection rely on the vehicle’s own sensors and processors, which is not only more accurate and faster but also greatly reduces the additional cost to the OEM.

At present, FUTURUS has completed three generations of 3D light field AR HUD hardware installed in real cars. The modified cars have been tested and optimized on actual roads for 3 years, and the system, algorithms, and software have gone through hundreds of iterations, so that they can meet L2, L3, and L4 assisted driving requirements well.

05 The augmented-reality endgame for smart cars is coming

At the China Automotive Blue Book Forum held on June 10-12, 2021, Xu Junfeng, founder and CEO of FUTURUS, said that a digital twin Internet based on augmented reality technology will be the ultimate destination of smart car development.

Xu Junfeng shared at the 2021 China Automotive Blue Book Forum

As AR glasses gain popularity in 2022, an Internet based on augmented reality and digital twins is expected to spread rapidly between 2022 and 2025. Internet giants such as Apple, Microsoft, Meta (formerly Facebook), Google, Tencent, and ByteDance are all accelerating their deployment of augmented reality and the digital twin Internet, and other Internet companies will also speed up their transformation and integration. Apple’s car, expected to launch around 2025, is expected to connect directly to augmented reality and the digital twin Internet, leading cars to leap past the mobile Internet and directly into the digital twin Internet era.

It is foreseeable that the “third space” of future life in smart cars will no longer be limited to the physical space inside the car. As autonomous driving technology matures, smart cars relying on light field AR HUD technology can interconnect people, vehicles, and the environment. This in-vehicle AR metaverse holds great room for imagination and development.

06 Concluding remarks

With this huge commercial value about to emerge, FUTURUS has prepared a comprehensive product and technology roadmap. FUTURUS has a first-mover advantage of many years in in-vehicle augmented reality and digital twin technology, and says it currently has the most mature and leading products and technologies, along with a complete patent portfolio. FUTURUS will also fully support traditional auto OEMs and new entrants to the automotive market in developing and producing panoramic light field AR HUDs, so that they can carry out a new generation of digital transformation and seize the opportunity in the coming digital twin Internet era.

Regarding the technical implementation and business logic of the next-generation augmented reality and digital twin Internet, FUTURUS will continue to publish special articles for detailed interpretation in the follow-up, so stay tuned!

END

Articles on the ChinaIT.com website are limited to providing more information and do not represent the standpoint of this website. For reprint, please indicate the source. The reprinted article comes from the Internet. If you have any copyright issues, please contact us: content@chinait.com.
